完整搭建

完整搭建

# 在 Kubernetes 中部署 EFK+kafka

Kubernetes 中比较流行的日志收集解决方案是 Elasticsearch、Fluentd 和 Kibana(EFK)技术栈,也是官方现在比较推荐的一种方案。

- Elasticsearch 是一个实时的、分布式的可扩展的搜索引擎,允许进行全文、结构化搜索,它通常用于索引和搜索大量日志数据,也可用于搜索许多不同类型的文档。

- Kibana 是 Elasticsearch 的一个功能强大的数据可视化 Dashboard,Kibana 允许你通过 web 界面来浏览 Elasticsearch 日志数据。

- Fluentd 是一个流行的开源数据收集器,我们将在 Kubernetes 集群节点上安装 Fluentd,通过获取容器日志文件、过滤和转换日志数据,然后将数据传递到 Elasticsearch 集群,在该集群中对其进行索引和存储。

正常情况下,上面这种方案就足够我们使用,但是如果集群日志太多,ES 不堪重负,我们就需要接入中间件来缓冲数据,对于这些中间件来说 kafka 和 redis 无疑是我们的首选方案。我们这里采用了 kafka,我们追求一切容器化,所以将这些组件全部都部署在 Kubernetes 中。

注:

(1)、我们将所有的组件都部署在一个单独的 namespace 中,我这里是新建了一个 kube-ops 的 namespace;

(2)、集群部署到分布式存储,可选 ceph,NFS 等,我这里采用的 NFS,如果你和我一样使用 NFS 并且不会搭建,可以参考https://www.qikqiak.com/k8s-book/docs/35.StorageClass.html (opens new window);

# 创建 Namespace

首先创建一个 Namespace,可以使用命令,如下:

kubectl create ns kube-ops

也可以使用 YAML 清单,如下(efk-ns.yaml):

apiVersion: v1

kind: Namespace

metadata:

name: kube-ops

2

3

4

如果使用清单,需要创建清单文件:

kubectl apply -f efk-ns.yaml

# 部署 Elasticsearch

首先我们来部署 Elasticsearch 集群。

开始部署 3 个节点的 ElasticSearch。其中关键点是应该设置 discover.zen.minimum_master_nodes=N/2+1,其中 N 是 Elasticsearch 集群中符合主节点的节点数,比如我们这里 3 个节点,意味着 N 应该设置为 2。这样,如果一个节点暂时与集群断开连接,则另外两个节点可以选择一个新的主节点,并且集群可以在最后一个节点尝试重新加入时继续运行,在扩展 Elasticsearch 集群时,一定要记住这个参数。

(1)、创建 elasticsearch 无头服务(elasticsearch-svc.yaml)

apiVersion: v1

kind: Service

metadata:

name: elasticsearch

namespace: kube-ops

labels:

app: elasticsearch

spec:

selector:

app: elasticsearch

clusterIP: None

ports:

- name: rest

port: 9200

- name: inter-node

port: 9300

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

定义为无头服务,是因为我们后面真正部署 elasticsearch 的 pod 是通过 statefulSet 部署的,到时候将其进行关联,另外 9200 是 REST API 端口,9300 是集群间通信端口。

然后我们创建这个资源对象。

## kubectl apply -f elasticsearch-svc.yaml

service/elasticsearch created

## kubectl get svc -n kube-ops

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

elasticsearch ClusterIP None <none> 9200/TCP,9300/TCP 9s

2

3

4

5

(2)、用 StatefulSet 部署 Elasticsearch,配置清单如下(elasticsearch-elasticsearch.yaml ):

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: es-cluster

namespace: kube-ops

spec:

serviceName: elasticsearch

replicas: 3

selector:

matchLabels:

app: elasticsearch

template:

metadata:

labels:

app: elasticsearch

spec:

containers:

- name: elasticsearch

image: docker.elastic.co/elasticsearch/elasticsearch-oss:6.4.3

resources:

limits:

cpu: 1000m

requests:

cpu: 1000m

ports:

- containerPort: 9200

name: rest

protocol: TCP

- containerPort: 9300

name: inter-node

protocol: TCP

volumeMounts:

- name: data

mountPath: /usr/share/elasticsearch/data

env:

- name: cluster.name

value: k8s-logs

- name: node.name

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: discovery.zen.ping.unicast.hosts

value: "es-cluster-0.elasticsearch,es-cluster-1.elasticsearch,es-cluster-2.elasticsearch"

- name: discovery.zen.minimum_master_nodes

value: "2"

- name: ES_JAVA_OPTS

value: "-Xms512m -Xmx512m"

initContainers:

- name: fix-permissions

image: busybox

command:

["sh", "-c", "chown -R 1000:1000 /usr/share/elasticsearch/data"]

securityContext:

privileged: true

volumeMounts:

- name: data

mountPath: /usr/share/elasticsearch/data

- name: increase-vm-max-map

image: busybox

command: ["sysctl", "-w", "vm.max_map_count=262144"]

securityContext:

privileged: true

- name: increase-fd-ulimit

image: busybox

command: ["sh", "-c", "ulimit -n 65536"]

securityContext:

privileged: true

volumeClaimTemplates:

- metadata:

name: data

labels:

app: elasticsearch

annotations:

volume.beta.kubernetes.io/storage-class: es-data-db

spec:

accessModes: ["ReadWriteOnce"]

storageClassName: es-data-db

resources:

requests:

storage: 10Gi

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

配置清单说明:

上面 Pod 中定义了两种类型的 container,普通的 container 和 initContainer。其中在 initContainer 种它有 3 个 container,它们会在所有容器启动前运行。

- 名为 fix-permissions 的 container 的作用是将 Elasticsearch 数据目录的用户和组更改为 1000:1000(Elasticsearch 用户的 UID)。因为默认情况下,Kubernetes 用 root 用户挂载数据目录,这会使得 Elasticsearch 无法方法该数据目录。

- 名为 increase-vm-max-map 的容器用来增加操作系统对 mmap 计数的限制,默认情况下该值可能太低,导致内存不足的错误

- 名为 increase-fd-ulimit 的容器用来执行 ulimit 命令增加打开文件描述符的最大数量

在普通 container 中,我们定义了名为 elasticsearch 的 container,然后暴露了 9200 和 9300 两个端口,注意名称要和上面定义的 Service 保持一致。然后通过 volumeMount 声明了数据持久化目录,下面我们再来定义 VolumeClaims。最后就是我们在容器中设置的一些环境变量了:

- cluster.name:Elasticsearch 集群的名称,我们这里命名成 k8s-logs;

- node.name:节点的名称,通过 metadata.name 来获取。这将解析为 es-cluster-[0,1,2],取决于节点的指定顺序;

- discovery.zen.ping.unicast.hosts:此字段用于设置在 Elasticsearch 集群中节点相互连接的发现方法。我们使用 unicastdiscovery 方式,它为我们的集群指定了一个静态主机列表。由于我们之前配置的无头服务,我们的 Pod 具有唯一的 DNS 域 es-cluster-[0,1,2].elasticsearch.logging.svc.cluster.local,因此我们相应地设置此变量。由于都在同一个 namespace 下面,所以我们可以将其缩短为 es-cluster-[0,1,2].elasticsearch;

- discovery.zen.minimum_master_nodes:我们将其设置为(N/2) + 1,N 是我们的群集中符合主节点的节点的数量。我们有 3 个 Elasticsearch 节点,因此我们将此值设置为 2(向下舍入到最接近的整数);

- ES_JAVA_OPTS:这里我们设置为-Xms512m -Xmx512m,告诉 JVM 使用 512 MB 的最小和最大堆。您应该根据群集的资源可用性和需求调整这些参数;

(3)、定义一个 StorageClass(elasticsearch-storage.yaml)

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: es-data-db

provisioner: rookieops/nfs

2

3

4

5

注意:由于我们这里采用的是 NFS 来存储,所以上面的 provisioner 需要和我们 nfs-client-provisoner 中保持一致。

然后我们创建资源:

## kubectl apply -f elasticsearch-storage.yaml

## kubectl apply -f elasticsearch-elasticsearch.yaml

## kubectl get pod -n kube-ops

NAME READY STATUS RESTARTS AGE

dingtalk-hook-8497494dc6-s6qkh 1/1 Running 0 16m

es-cluster-0 1/1 Running 0 10m

es-cluster-1 1/1 Running 0 10m

es-cluster-2 1/1 Running 0 9m20s

## kubectl get pvc -n kube-ops

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

data-es-cluster-0 Bound pvc-9f15c0f8-60a8-485d-b650-91fb8f5f8076 10Gi RWO es-data-db 18m

data-es-cluster-1 Bound pvc-503828ec-d98e-4e94-9f00-eaf6c05f3afd 10Gi RWO es-data-db 11m

data-es-cluster-2 Bound pvc-3d2eb82e-396a-4eb0-bb4e-2dd4fba8600e 10Gi RWO es-data-db 10m

## kubectl get svc -n kube-ops

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dingtalk-hook ClusterIP 10.68.122.48 <none> 5000/TCP 18m

elasticsearch ClusterIP None <none> 9200/TCP,9300/TCP 19m

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

测试:

## kubectl port-forward es-cluster-0 9200:9200 --namespace=kube-ops

Forwarding from 127.0.0.1:9200 -> 9200

Forwarding from [::1]:9200 -> 9200

Handling connection for 9200

2

3

4

如果看到如下结果,就表示服务正常:

## curl http://localhost:9200/_cluster/state?pretty

{

"cluster_name": "k8s-logs",

"compressed_size_in_bytes": 337,

"cluster_uuid": "nzc4y-eDSuSaYU1TigFAWw",

"version": 3,

"state_uuid": "6Mvd-WTPT0e7WMJV23Vdiw",

"master_node": "KRyMrbS0RXSfRkpS0ZaarQ",

"blocks": {},

"nodes":

{

"XGP4TrkrQ8KNMpH3pQlaEQ":

{

"name": "es-cluster-2",

"ephemeral_id": "f-R_IyfoSYGhY27FmA41Tg",

"transport_address": "172.20.1.104:9300",

"attributes": {},

},

"KRyMrbS0RXSfRkpS0ZaarQ":

{

"name": "es-cluster-0",

"ephemeral_id": "FpTnJTR8S3ysmoZlPPDnSg",

"transport_address": "172.20.1.102:9300",

"attributes": {},

},

"Xzjk2n3xQUutvbwx2h7f4g":

{

"name": "es-cluster-1",

"ephemeral_id": "FKjRuegwToe6Fz8vgPmSNw",

"transport_address": "172.20.1.103:9300",

"attributes": {},

},

},

"metadata":

{

"cluster_uuid": "nzc4y-eDSuSaYU1TigFAWw",

"templates": {},

"indices": {},

"index-graveyard": { "tombstones": [] },

},

"routing_table": { "indices": {} },

"routing_nodes":

{

"unassigned": [],

"nodes":

{

"KRyMrbS0RXSfRkpS0ZaarQ": [],

"XGP4TrkrQ8KNMpH3pQlaEQ": [],

"Xzjk2n3xQUutvbwx2h7f4g": [],

},

},

"snapshots": { "snapshots": [] },

"restore": { "snapshots": [] },

"snapshot_deletions": { "snapshot_deletions": [] },

}

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

到此,Elasticsearch 部署完成。

# 部署 kibana

对于 kibana,它只是一个展示工具,所以我们用 Deployment 部署即可。

(1)、定义 kibana service 的配置清单(kibana-svc.yaml)

apiVersion: v1

kind: Service

metadata:

name: kibana

namespace: kube-ops

labels:

app: kibana

spec:

ports:

- port: 5601

type: NodePort

selector:

app: kibana

2

3

4

5

6

7

8

9

10

11

12

13

我们这里配置的 Service 是采用 NodePort 类型,当然也可以采用 ingress,推荐使用 ingress。

(2)、定义 kibana Deployment 配置清单(kibana-deploy.yaml)

apiVersion: apps/v1

kind: Deployment

metadata:

name: kibana

namespace: kube-ops

labels:

app: kibana

spec:

selector:

matchLabels:

app: kibana

template:

metadata:

labels:

app: kibana

spec:

containers:

- name: kibana

image: docker.elastic.co/kibana/kibana-oss:6.4.3

resources:

limits:

cpu: 1000m

requests:

cpu: 100m

env:

- name: ELASTICSEARCH_URL

value: http://elasticsearch:9200

ports:

- containerPort: 5601

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

创建配置清单:

## kubectl apply -f kibana.yaml

service/kibana created

deployment.apps/kibana created

## kubectl get svc -n kube-ops

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dingtalk-hook ClusterIP 10.68.122.48 <none> 5000/TCP 47m

elasticsearch ClusterIP None <none> 9200/TCP,9300/TCP 48m

kibana NodePort 10.68.221.60 <none> 5601:26575/TCP 7m29s

[root@ecs-5704-0003 storage]## kubectl get pod -n kube-ops

NAME READY STATUS RESTARTS AGE

dingtalk-hook-8497494dc6-s6qkh 1/1 Running 0 47m

es-cluster-0 1/1 Running 0 41m

es-cluster-1 1/1 Running 0 41m

es-cluster-2 1/1 Running 0 40m

kibana-7fc9f8c964-68xbh 1/1 Running 0 7m41s

2

3

4

5

6

7

8

9

10

11

12

13

14

15

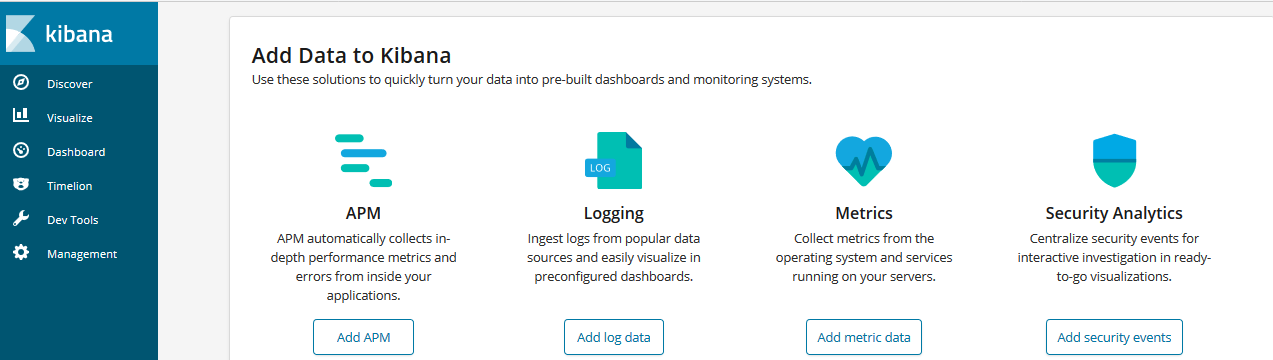

16

如果看到一下界面,表示你的 kibana 部署完成。

# 部署 kafka

Apache Kafka 是一个分布式发布 - 订阅消息系统和一个强大的队列,可以处理大量的数据,并使您能够将消息从一个端点传递到另一个端点。 Kafka 适合离线和在线消息消费。 Kafka 消息保留在磁盘上,并在群集内复制以防止数据丢失。

以下是 Kafka 的几个好处 :

- 可靠性 - Kafka 是分布式,分区,复制和容错的。

- 可扩展性 - Kafka 消息传递系统轻松缩放,无需停机。

- 耐用性 - Kafka 使用分布式提交日志,这意味着消息会尽可能快地保留在磁盘上,因此它是持久的。

- 性能 - Kafka 对于发布和订阅消息都具有高吞吐量。 即使存储了许多 TB 的消息,它也保持稳定的性能。

Kafka 的一个关键依赖是 Zookeeper,它是一个分布式配置和同步服务, Zookeeper 是 Kafka 代理和消费者之间的协调接口, Kafka 服务器通过 Zookeeper 集群共享信息。 Kafka 在 Zookeeper 中存储基本元数据,例如关于主题,代理,消费者偏移(队列读取器)等的信息。

由于所有关键信息存储在 Zookeeper 中,并且它通常在其整体上复制此数据,因此 Kafka 代理/ Zookeeper 的故障不会影响 Kafka 集群的状态,另外 Kafka 代理之间的领导者选举也通过使用 Zookeeper 在领导者失败的情况下完成的。

# 部署 zookeeper

(1)、定义 ZK 的 storageClass(zookeeper-storage.yaml)

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: zk-data-db

provisioner: rookieops/nfs

2

3

4

5

(2)、定义 ZK 的 headless service(zookeeper-svc.yaml)

apiVersion: v1

kind: Service

metadata:

name: zk-svc

namespace: kube-ops

labels:

app: zk-svc

spec:

ports:

- port: 2888

name: server

- port: 3888

name: leader-election

clusterIP: None

selector:

app: zk

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

(3)、定义 ZK 的 configMap(zookeeper-config.yaml)

apiVersion: v1

kind: ConfigMap

metadata:

name: zk-cm

namespace: kube-ops

data:

jvm.heap: "1G"

tick: "2000"

init: "10"

sync: "5"

client.cnxns: "60"

snap.retain: "3"

purge.interval: "0"

2

3

4

5

6

7

8

9

10

11

12

13

(4)、定义 ZK 的 PodDisruptionBudget(zookeeper-pdb.yaml)

apiVersion: policy/v1beta1

kind: PodDisruptionBudget

metadata:

name: zk-pdb

namespace: kube-ops

spec:

selector:

matchLabels:

app: zk

minAvailable: 2

2

3

4

5

6

7

8

9

10

说明:PodDisruptionBudget 的作用就是为了保证业务不中断或者业务 SLA 不降级。通过 PodDisruptionBudget 控制器可以设置应用 POD 集群处于运行状态最低个数,也可以设置应用 POD 集群处于运行状态的最低百分比,这样可以保证在主动销毁应用 POD 的时候,不会一次性销毁太多的应用 POD,从而保证业务不中断或业务 SLA 不降级。

(5)、定义 ZK 的 statefulSet(zookeeper-statefulset.yaml)

apiVersion: apps/v1beta1

kind: StatefulSet

metadata:

name: zk

namespace: kube-ops

spec:

serviceName: zk-svc

replicas: 3

template:

metadata:

labels:

app: zk

spec:

containers:

- name: k8szk

imagePullPolicy: Always

image: registry.cn-hangzhou.aliyuncs.com/rookieops/zookeeper:3.4.10

resources:

requests:

memory: "2Gi"

cpu: "500m"

ports:

- containerPort: 2181

name: client

- containerPort: 2888

name: server

- containerPort: 3888

name: leader-election

env:

- name: ZK_REPLICAS

value: "3"

- name: ZK_HEAP_SIZE

valueFrom:

configMapKeyRef:

name: zk-cm

key: jvm.heap

- name: ZK_TICK_TIME

valueFrom:

configMapKeyRef:

name: zk-cm

key: tick

- name: ZK_INIT_LIMIT

valueFrom:

configMapKeyRef:

name: zk-cm

key: init

- name: ZK_SYNC_LIMIT

valueFrom:

configMapKeyRef:

name: zk-cm

key: tick

- name: ZK_MAX_CLIENT_CNXNS

valueFrom:

configMapKeyRef:

name: zk-cm

key: client.cnxns

- name: ZK_SNAP_RETAIN_COUNT

valueFrom:

configMapKeyRef:

name: zk-cm

key: snap.retain

- name: ZK_PURGE_INTERVAL

valueFrom:

configMapKeyRef:

name: zk-cm

key: purge.interval

- name: ZK_CLIENT_PORT

value: "2181"

- name: ZK_SERVER_PORT

value: "2888"

- name: ZK_ELECTION_PORT

value: "3888"

command:

- sh

- -c

- zkGenConfig.sh && zkServer.sh start-foreground

readinessProbe:

exec:

command:

- "zkOk.sh"

initialDelaySeconds: 10

timeoutSeconds: 5

livenessProbe:

exec:

command:

- "zkOk.sh"

initialDelaySeconds: 10

timeoutSeconds: 5

volumeMounts:

- name: datadir

mountPath: /var/lib/zookeeper

volumeClaimTemplates:

- metadata:

name: datadir

spec:

accessModes: ["ReadWriteOnce"]

storageClassName: zk-data-db

resources:

requests:

storage: 1Gi

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

94

95

96

97

98

99

100

然后创建配置清单:

## kubectl apply -f zookeeper-storage.yaml

## kubectl apply -f zookeeper-svc.yaml

## kubectl apply -f zookeeper-config.yaml

## kubectl apply -f zookeeper-pdb.yaml

## kubectl apply -f zookeeper-statefulset.yaml

## kubectl get pod -n kube-ops

NAME READY STATUS RESTARTS AGE

zk-0 1/1 Running 0 12m

zk-1 1/1 Running 0 12m

zk-2 1/1 Running 0 11m

2

3

4

5

6

7

8

9

10

然后查看集群状态:

## for i in 0 1 2; do kubectl exec -n kube-ops zk-$i zkServer.sh status; done

ZooKeeper JMX enabled by default

Using config: /usr/bin/../etc/zookeeper/zoo.cfg

Mode: follower

ZooKeeper JMX enabled by default

Using config: /usr/bin/../etc/zookeeper/zoo.cfg

Mode: follower

ZooKeeper JMX enabled by default

Using config: /usr/bin/../etc/zookeeper/zoo.cfg

Mode: leader

2

3

4

5

6

7

8

9

10

# 部署 Kafka

(1)、制作镜像,Dokcerfile 如下:

kafka 下载地址:wget https://www-us.apache.org/dist/kafka/2.2.0/kafka_2.11-2.2.0.tgz (opens new window)

FROM centos:centos7

LABEL "auth"="rookieops" \

"mail"="rookieops@163.com"

ENV TIME_ZONE Asia/Shanghai

## install JAVA

ADD jdk-8u131-linux-x64.tar.gz /opt/

ENV JAVA_HOME /opt/jdk1.8.0_131

ENV PATH ${JAVA_HOME}/bin:${PATH}

## install kafka

ADD kafka_2.11-2.3.1.tgz /opt/

RUN mv /opt/kafka_2.11-2.3.1 /opt/kafka

WORKDIR /opt/kafka

EXPOSE 9092

CMD ["./bin/kafka-server-start.sh", "config/server.properties"]

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

然后 docker build,docker push 到镜像仓库(操作略)。

(2)、定义 kafka 的 storageClass(kafka-storage.yaml )

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: kafka-data-db

provisioner: rookieops/nfs

2

3

4

5

(3)、定义 kafka headless Service(kafka-svc.yaml)

apiVersion: v1

kind: Service

metadata:

name: kafka-svc

namespace: kube-ops

labels:

app: kafka

spec:

selector:

app: kafka

clusterIP: None

ports:

- name: server

port: 9092

2

3

4

5

6

7

8

9

10

11

12

13

14

(4)、定义 kafka 的 configMap(kafka-config.yaml)

apiVersion: v1

kind: ConfigMap

metadata:

name: kafka-config

namespace: kube-ops

data:

server.properties: |

broker.id=${HOSTNAME##*-}

listeners=yamlTEXT://:9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/data/kafka/logs

num.partitions=1

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=zk-0.zk-svc.kube-ops.svc.cluster.local:2181,zk-1.zk-svc.kube-ops.svc.cluster.local:2181,zk-2.zk-svc.kube-ops.svc.cluster.local:2181

zookeeper.connection.timeout.ms=6000

group.initial.rebalance.delay.ms=0

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

(5)、定义 kafka 的 statefuleSet 配置清单(kafka.yaml)

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: kafka

namespace: kube-ops

spec:

serviceName: kafka-svc

replicas: 3

selector:

matchLabels:

app: kafka

template:

metadata:

labels:

app: kafka

spec:

affinity:

podAffinity:

preferredDuringSchedulingIgnoredDuringExecution:

- weight: 1

podAffinityTerm:

labelSelector:

matchExpressions:

- key: "app"

operator: In

values:

- zk

topologyKey: "kubernetes.io/hostname"

terminationGracePeriodSeconds: 300

containers:

- name: kafka

image: registry.cn-hangzhou.aliyuncs.com/rookieops/kafka:2.3.1-beta

imagePullPolicy: Always

resources:

requests:

cpu: 500m

memory: 1Gi

limits:

cpu: 500m

memory: 1Gi

command:

- "/bin/sh"

- "-c"

- "./bin/kafka-server-start.sh config/server.properties --override broker.id=${HOSTNAME##*-}"

ports:

- name: server

containerPort: 9092

volumeMounts:

- name: config

mountPath: /opt/kafka/config/server.properties

subPath: server.properties

- name: data

mountPath: /data/kafka/logs

volumes:

- name: config

configMap:

name: kafka-config

volumeClaimTemplates:

- metadata:

name: data

spec:

accessModes: ["ReadWriteOnce"]

storageClassName: kafka-data-db

resources:

requests:

storage: 10Gi

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

创建配置清单:

## kubectl apply -f kafka-storage.yaml

## kubectl apply -f kafka-svc.yaml

## kubectl apply -f kafka-config.yaml

## kubectl apply -f kafka.yaml

## kubectl get pod -n kube-ops

NAME READY STATUS RESTARTS AGE

kafka-0 1/1 Running 0 13m

kafka-1 1/1 Running 0 13m

kafka-2 1/1 Running 0 10m

zk-0 1/1 Running 0 77m

zk-1 1/1 Running 0 77m

zk-2 1/1 Running 0 76m

2

3

4

5

6

7

8

9

10

11

12

测试:

(1)、进入一个 container,创建 topic,并开启 consumer 等待 producer 生产数据

## kubectl exec -it -n kube-ops kafka-0 -- /bin/bash

$ cd /opt/kafka

$ ./bin/kafka-topics.sh --create --topic test --zookeeper zk-0.zk-svc.kube-ops.svc.cluster.local:2181,zk-1.zk-svc.kube-ops.svc.cluster.local:2181,zk-2.zk-svc.kube-ops.svc.cluster.local:2181 --partitions 3 --replication-factor 2

Created topic "test".

## 消费

$ ./bin/kafka-console-consumer.sh --topic test --bootstrap-server localhost:9092

2

3

4

5

6

(2)、再进入另一个 container 做 producer:

## kubectl exec -it -n kube-ops kafka-1 -- /bin/bash

$ cd /opt/kafka

$ ./bin/kafka-console-producer.sh --topic test --broker-list localhost:9092

hello

nihao

2

3

4

5

可以看到 consumer 上会产生消费信息:

$ ./bin/kafka-console-consumer.sh --topic test --bootstrap-server localhost:9092

hello

nihao

2

3

至此,kafka 集群搭建完成。

# 部署 Logstash

在这里部署 Logstash 的作用是读取 kafka 中的信息,然后传递给我们的后端存储 ES,为了简化,我这里直接使用 Deployment 部署了。

制作镜像,Dockerfile 如下:

FROM centos:centos7

LABEL "auth"="rookieops" \

"mail"="rookieops@163.com"

ENV TIME_ZONE Asia/Shanghai

## install JAVA

ADD jdk-8u131-linux-x64.tar.gz /opt/

ENV JAVA_HOME /opt/jdk1.8.0_131

ENV PATH ${JAVA_HOME}/bin:${PATH}

## install logstash

ADD logstash-7.1.1.tar.gz /opt/

RUN mv /opt/logstash-7.1.1 /opt/logstash

2

3

4

5

6

7

8

9

10

11

12

13

(1)、定义 configMap 配置清单(logstash-config.yaml)

apiVersion: v1

kind: ConfigMap

metadata:

name: logstash-k8s-config

namespace: kube-ops

data:

containers.conf: |

input {

kafka {

codec => "json"

topics => ["test"]

bootstrap_servers => ["kafka-0.kafka-svc.kube-ops:9092, kafka-1.kafka-svc.kube-ops:9092, kafka-2.kafka-svc.kube-ops:9092"]

group_id => "logstash-g1"

}

}

output {

elasticsearch {

hosts => ["es-cluster-0.elasticsearch.kube-ops:9200", "es-cluster-1.elasticsearch.kube-ops:9200", "es-cluster-2.elasticsearch.kube-ops:9200"]

index => "logstash-%{+YYYY.MM.dd}"

}

}

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

(2)、定义 Deployment 配置清单(logstash.yaml)

kind: Deployment

metadata:

name: logstash

namespace: kube-ops

spec:

replicas: 1

selector:

matchLabels:

app: logstash

template:

metadata:

labels:

app: logstash

spec:

containers:

- name: logstash

image: registry.cn-hangzhou.aliyuncs.com/rookieops/logstash-kubernetes:7.1.1

volumeMounts:

- name: config

mountPath: /opt/logstash/config/containers.conf

subPath: containers.conf

command:

- "/bin/sh"

- "-c"

- "/opt/logstash/bin/logstash -f /opt/logstash/config/containers.conf"

volumes:

- name: config

configMap:

name: logstash-k8s-config

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

然后生成配置:

## kubectl apply -f logstash-config.yaml

## kubectl apply -f logstash.yaml

2

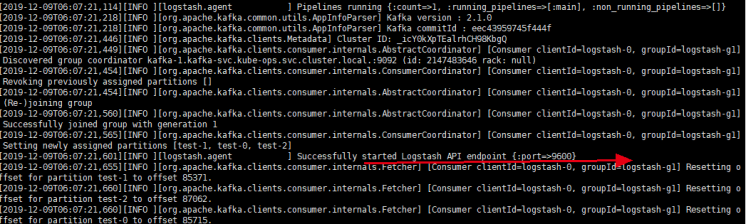

然后观察状态,查看日志:

## kubectl get pod -n kube-ops

NAME READY STATUS RESTARTS AGE

dingtalk-hook-856c5dbbc9-srcm6 1/1 Running 0 3d20h

es-cluster-0 1/1 Running 0 22m

es-cluster-1 1/1 Running 0 22m

es-cluster-2 1/1 Running 0 22m

kafka-0 1/1 Running 0 3h6m

kafka-1 1/1 Running 0 3h6m

kafka-2 1/1 Running 0 3h6m

kibana-7fc9f8c964-dqr68 1/1 Running 0 5d2h

logstash-678c945764-lkl2n 1/1 Running 0 10m

zk-0 1/1 Running 0 3d21h

zk-1 1/1 Running 0 3d21h

zk-2 1/1 Running 0 3d21h

2

3

4

5

6

7

8

9

10

11

12

13

14

# 部署 Fluentd

Fluentd 是一个高效的日志聚合器,是用 Ruby 编写的,并且可以很好地扩展。对于大部分企业来说,Fluentd 足够高效并且消耗的资源相对较少,另外一个工具 Fluent-bit 更轻量级,占用资源更少,但是插件相对 Fluentd 来说不够丰富,所以整体来说,Fluentd 更加成熟,使用更加广泛,所以我们这里也同样使用 Fluentd 来作为日志收集工具。

(1)、安装 fluent-plugin-kafka 插件

我这里的安装步骤是先起一个 fluentd 容器,然后安装插件,最后 commit 一下,再推送到仓库。具体步骤如下:

a、先用 docker 起一个容器

## docker run -it registry.cn-hangzhou.aliyuncs.com/rookieops/fluentd-elasticsearch:v2.0.4 /bin/bash

$ gem install fluent-plugin-kafka --no-document

2

b、退出容器,重新 commit 一下:

## docker commit c29b250d8df9 registry.cn-hangzhou.aliyuncs.com/rookieops/fluentd-elasticsearch:v2.0.4

c、将安装了插件的镜像推向仓库:

## docker push registry.cn-hangzhou.aliyuncs.com/rookieops/fluentd-elasticsearch:v2.0.4

(2)、定义 Fluentd 的 configMap(fluentd-config.yaml)

kind: ConfigMap

apiVersion: v1

metadata:

name: fluentd-config

namespace: kube-ops

labels:

addonmanager.kubernetes.io/mode: Reconcile

data:

system.conf: |-

<system>

root_dir /tmp/fluentd-buffers/

</system>

containers.input.conf: |-

<source>

@id fluentd-containers.log

@type tail

path /var/log/containers/*.log

pos_file /var/log/es-containers.log.pos

time_format %Y-%m-%dT%H:%M:%S.%NZ

localtime

tag raw.kubernetes.*

format json

read_from_head true

</source>

## Detect exceptions in the log output and forward them as one log entry.

<match raw.kubernetes.**>

@id raw.kubernetes

@type detect_exceptions

remove_tag_prefix raw

message log

stream stream

multiline_flush_interval 5

max_bytes 500000

max_lines 1000

</match>

system.input.conf: |-

## Logs from systemd-journal for interesting services.

<source>

@id journald-docker

@type systemd

filters [{ "_SYSTEMD_UNIT": "docker.service" }]

<storage>

@type local

persistent true

</storage>

read_from_head true

tag docker

</source>

<source>

@id journald-kubelet

@type systemd

filters [{ "_SYSTEMD_UNIT": "kubelet.service" }]

<storage>

@type local

persistent true

</storage>

read_from_head true

tag kubelet

</source>

forward.input.conf: |-

## Takes the messages sent over TCP

<source>

@type forward

</source>

output.conf: |-

## Enriches records with Kubernetes metadata

<filter kubernetes.**>

@type kubernetes_metadata

</filter>

<match **>

@id kafka

@type kafka2

@log_level info

include_tag_key true

brokers kafka-0.kafka-svc.kube-ops:9092,kafka-1.kafka-svc.kube-ops:9092,kafka-2.kafka-svc.kube-ops:9092

logstash_format true

request_timeout 30s

<buffer>

@type file

path /var/log/fluentd-buffers/kubernetes.system.buffer

flush_mode interval

retry_type exponential_backoff

flush_thread_count 2

flush_interval 5s

retry_forever

retry_max_interval 30

chunk_limit_size 2M

queue_limit_length 8

overflow_action block

</buffer>

## data type settings

<format>

@type json

</format>

## topic settings

topic_key topic

default_topic test

## producer settings

required_acks -1

compression_codec gzip

</match>

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

94

95

96

97

98

99

100

101

(3)、定义 DeamonSet 配置清单(fluentd-daemonset.yaml)

apiVersion: v1

kind: ServiceAccount

metadata:

name: fluentd-es

namespace: kube-ops

labels:

k8s-app: fluentd-es

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: fluentd-es

labels:

k8s-app: fluentd-es

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

rules:

- apiGroups:

- ""

resources:

- "namespaces"

- "pods"

verbs:

- "get"

- "watch"

- "list"

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: fluentd-es

labels:

k8s-app: fluentd-es

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

subjects:

- kind: ServiceAccount

name: fluentd-es

namespace: kube-ops

apiGroup: ""

roleRef:

kind: ClusterRole

name: fluentd-es

apiGroup: ""

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: fluentd-es

namespace: kube-ops

labels:

k8s-app: fluentd-es

version: v2.0.4

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

spec:

selector:

matchLabels:

k8s-app: fluentd-es

version: v2.0.4

template:

metadata:

labels:

k8s-app: fluentd-es

kubernetes.io/cluster-service: "true"

version: v2.0.4

## This annotation ensures that fluentd does not get evicted if the node

## supports critical pod annotation based priority scheme.

## Note that this does not guarantee admission on the nodes (#40573).

annotations:

scheduler.alpha.kubernetes.io/critical-pod: ""

spec:

serviceAccountName: fluentd-es

containers:

- name: fluentd-es

image: registry.cn-hangzhou.aliyuncs.com/rookieops/fluentd-elasticsearch:v2.0.4

command:

- "/bin/sh"

- "-c"

- "/run.sh $FLUENTD_ARGS"

env:

- name: FLUENTD_ARGS

value: --no-supervisor -q

resources:

limits:

memory: 500Mi

requests:

cpu: 100m

memory: 200Mi

volumeMounts:

- name: varlog

mountPath: /var/log

- name: varlibdockercontainers

mountPath: /var/lib/docker/containers

readOnly: true

- name: config-volume

mountPath: /etc/fluent/config.d

nodeSelector:

beta.kubernetes.io/fluentd-ds-ready: "true"

tolerations:

- key: node-role.kubernetes.io/master

operator: Exists

effect: NoSchedule

terminationGracePeriodSeconds: 30

volumes:

- name: varlog

hostPath:

path: /var/log

- name: varlibdockercontainers

hostPath:

path: /var/lib/docker/containers

- name: config-volume

configMap:

name: fluentd-config

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

94

95

96

97

98

99

100

101

102

103

104

105

106

107

108

109

110

111

112

113

114

115

116

创建配置清单:

## kubectl apply -f fluentd-daemonset.yaml

## kubectl apply -f fluentd-config.yaml

## kubectl get pod -n kube-ops

NAME READY STATUS RESTARTS AGE

dingtalk-hook-856c5dbbc9-srcm6 1/1 Running 0 3d21h

es-cluster-0 1/1 Running 0 112m

es-cluster-1 1/1 Running 0 112m

es-cluster-2 1/1 Running 0 112m

fluentd-es-jvhqv 1/1 Running 0 4h29m

fluentd-es-s7v6m 1/1 Running 0 4h29m

kafka-0 1/1 Running 0 4h36m

kafka-1 1/1 Running 0 4h36m

kafka-2 1/1 Running 0 4h36m

kibana-7fc9f8c964-dqr68 1/1 Running 0 5d4h

logstash-678c945764-lkl2n 1/1 Running 0 100m

zk-0 1/1 Running 0 3d23h

zk-1 1/1 Running 0 3d23h

zk-2 1/1 Running 0 3d23h

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

至此,整套流程搭建完了,然后我们进入一台 kafka 容器,我们查看 consumer 消息,如下:

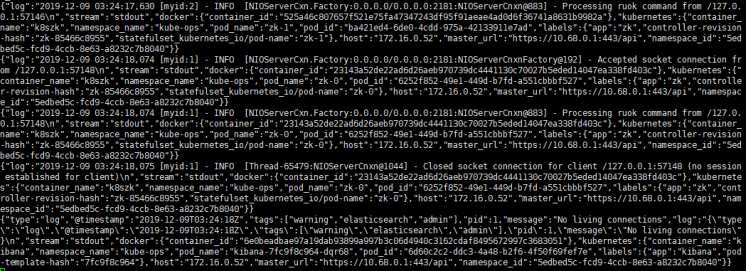

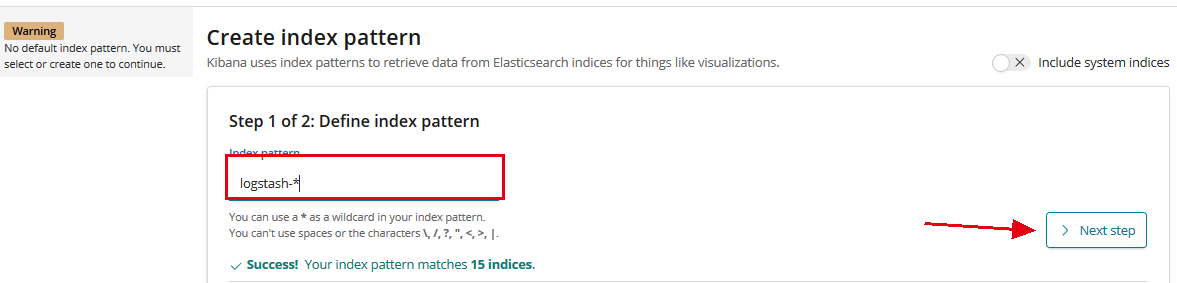

然后进入 kibana,先创建索引,由于我们在 logstash 的配置文件中定义了索引为 logstash-%{+YYYY.MM.dd},所以我们创建索引如下:

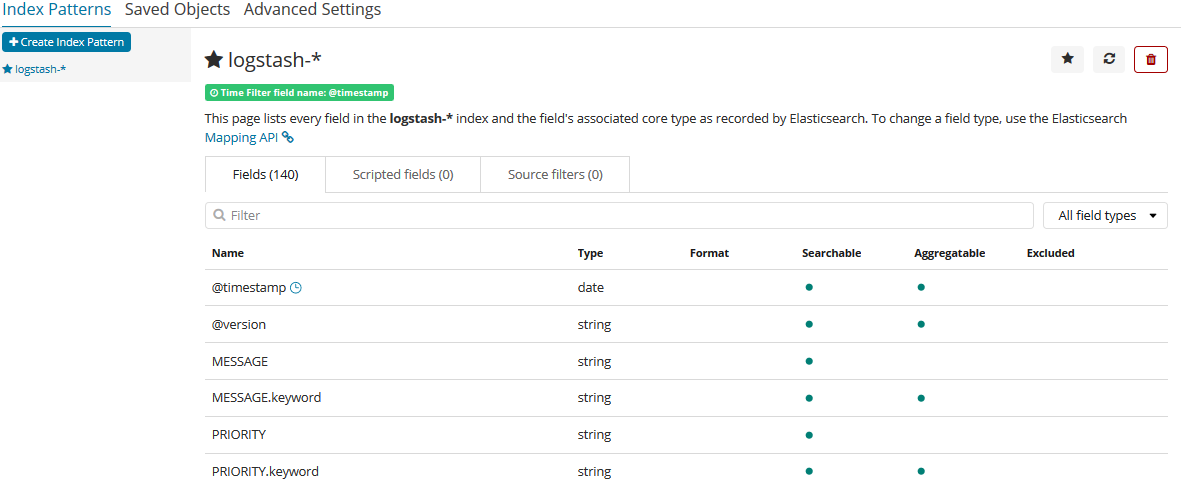

创建成功后如下:

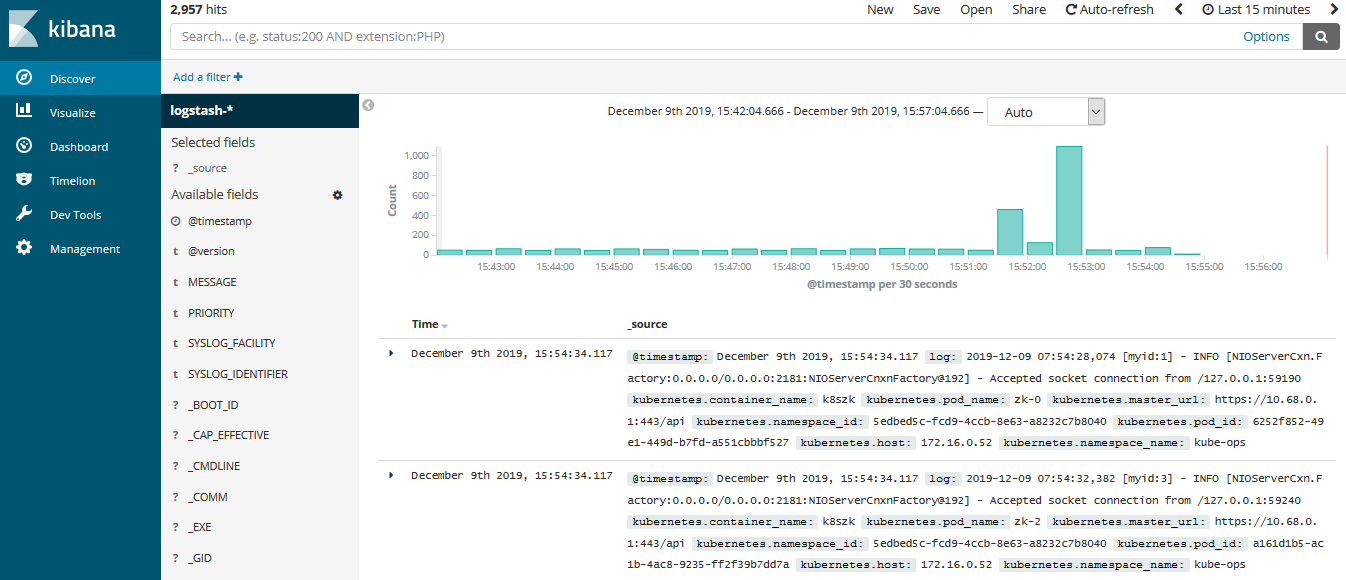

然后我们查看日志信息,如下:

到此,整个日志收集系统搭建完成。